The Importance of AI TRiSM Today

AI Trust, Risk, and Security Management Explained

- AI TRiSM (AI Trust, Risk, and Security Management) is a structured framework for governing, securing, and monitoring AI systems across their lifecycle.

- AI TRiSM addresses critical challenges such as model risk, bias, explainability gaps, regulatory compliance, and AI-driven security threats.

- As AI adoption accelerates, organizations need continuous governance and observability, not one-time controls.

- AI TRiSM is rapidly becoming foundational for enterprises deploying generative AI, machine learning, and autonomous systems at scale.

AI Trust, Risk, and Security Management (AI TRiSM) is emerging as one of the most important disciplines in modern cybersecurity and enterprise risk management. As organizations embed AI into core business processes, decision-making, and customer-facing systems, the risk surface expands far beyond traditional IT security.

AI systems now influence credit approvals, hiring decisions, fraud detection, healthcare diagnostics, industrial automation, and national infrastructure. Without proper guardrails, these systems can introduce unintended bias, opaque decision-making, regulatory exposure, and new attack vectors.

This is where AI TRiSM plays a critical role.

In this article, we explore what AI TRiSM is, why it matters, how it works, and how organizations can operationalize it responsibly.

What Is AI Trust, Risk, and Security Management (AI TRiSM)?

Gartner defines AI TRiSM as a framework that enables organizations to ensure trust, fairness, robustness, security, privacy, and governance across AI systems.

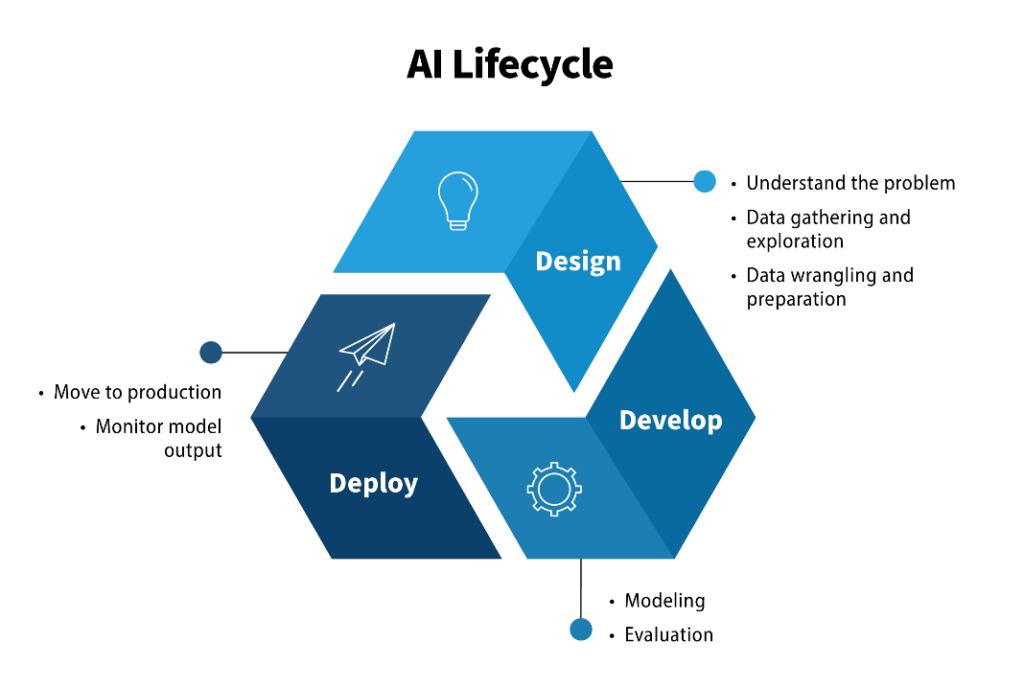

At its core, AI TRiSM focuses on managing AI risk across the entire lifecycle, including:

- Data collection and training

- Model development and validation

- Deployment and runtime behavior

- Ongoing monitoring, auditing, and compliance

Rather than treating AI as just another application, AI TRiSM recognizes that AI systems behave probabilistically, evolve over time, and can make decisions that directly impact people, finances, and safety.

Why AI TRiSM Is Critical Today

1. Real-World AI Failures and Risk Exposure

AI systems have already demonstrated how quickly technical flaws can turn into societal and organizational crises.

Examples include:

- Algorithmic bias leading to unfair targeting or exclusion.

- AI hallucinations producing false or misleading outputs.

- Automated decisions made without explainability or accountability.

When AI outcomes are incorrect or unethical, the consequences are not just technical; they are legal, financial, and reputational.

2. The Rapidly Evolving AI Regulatory Landscape

Governments and regulators worldwide are introducing AI-specific laws and frameworks focused on:

- Transparency and explainability

- Data privacy and consent

- Accountability for automated decisions

- Risk classification of AI systems

Organizations must now demonstrate continuous compliance, not just policy intent. AI TRiSM provides the structure to operationalize these requirements.

3. AI-Specific Security Threats

AI systems themselves are now targets of attack, including:

- Data poisoning during training

- Model manipulation and adversarial inputs

- Prompt injection and misuse of generative AI

- Abuse of AI for phishing, impersonation, and social engineering

Traditional security tools are not designed to understand AI behavior or AI-specific risks, making AI TRiSM essential for modern defense strategies.

Key Benefits of Implementing AI TRiSM

AI TRiSM establishes security controls that protect:

- Training data and model integrity

- Inference pipelines and APIs

- AI decision workflows from manipulation

This reduces the likelihood of AI systems being exploited or weaponized.

Trustworthy AI requires:

- Explainable decision paths

- Visibility into model behavior

- Clear accountability mechanisms

AI TRiSM helps ensure that AI decisions can be understood, justified, and audited, building trust with users, regulators, and stakeholders.

Operational Resilience and Scalability

With AI TRiSM in place, organizations can:

- Safely scale AI adoption

- Detect performance drift early

- Continuously improve AI reliability

This enables innovation without sacrificing control.

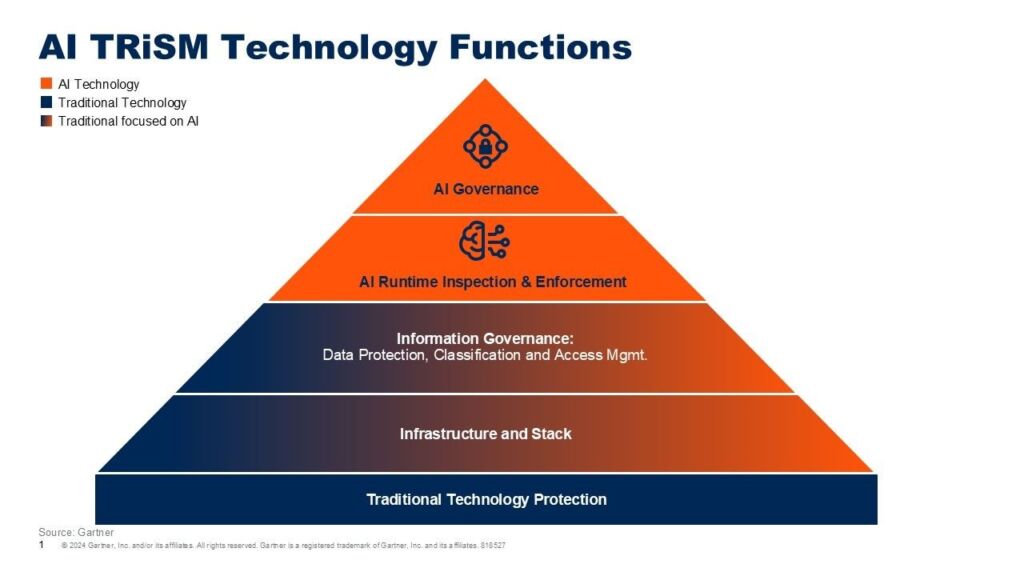

The AI TRiSM framework is typically built around four foundational pillars.

1. Explainability and Model Monitoring

This pillar focuses on:

- Making AI decisions interpretable

- Tracking model accuracy and drift

- Detecting bias or unexpected behavior

Continuous monitoring ensures AI systems behave as intended even as data patterns change.
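One way to make drift tracking concrete is the Population Stability Index (PSI), which compares how a feature or model score is distributed in production against a baseline sample. The sketch below is a minimal illustration, not a prescribed AI TRiSM method; the function name, the bin count, and the sample data are assumptions made for this example.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """Compare two numeric samples; a larger PSI means a larger distribution shift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp values outside the baseline range
        # A small floor keeps log() defined when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Illustrative data: scores seen at validation time vs. scores seen in production.
baseline = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(baseline, production)
```

A commonly quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but those cut-offs are conventions and should be tuned per model.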

2. Model Operations (ModelOps)

ModelOps governs the operational lifecycle of AI models, including:

- Version control and deployment

- Performance benchmarking

- Controlled updates and rollback

This prevents untested or degraded models from impacting production systems.
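As a rough sketch of what a controlled update with rollback might look like, the example below gates promotion on validation results and a benchmark comparison against the live model. The `ModelVersion` and `ModelRegistry` classes, the `max_regression` tolerance, and the version names are all hypothetical; real ModelOps tooling will differ.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelVersion:
    version: str
    accuracy: float     # benchmark score on a held-out set
    tests_passed: bool  # outcome of pre-deployment validation

class ModelRegistry:
    """Hypothetical in-memory registry that tracks which version serves production."""

    def __init__(self, max_regression=0.01):
        self.max_regression = max_regression
        self.history = []  # promoted versions, oldest first

    @property
    def live(self):
        return self.history[-1] if self.history else None

    def promote(self, candidate):
        # Block untested models outright.
        if not candidate.tests_passed:
            return False
        # Block models that benchmark noticeably worse than the live one.
        if self.live and candidate.accuracy < self.live.accuracy - self.max_regression:
            return False
        self.history.append(candidate)
        return True

    def rollback(self):
        # Revert to the previously promoted version.
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.live

registry = ModelRegistry()
registry.promote(ModelVersion("v1", accuracy=0.90, tests_passed=True))
registry.promote(ModelVersion("v2", accuracy=0.85, tests_passed=True))  # rejected: degraded
```

Keeping the promotion history explicit is a design choice made here so that rollback is a single, auditable step rather than a redeployment.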

3. AI Application Security

AI applications introduce new security challenges, such as:

- Shadow AI tools used without approval

- Exposed inference APIs

- Unauthorized access to models and data

AI TRiSM enforces security controls that protect AI assets just like critical infrastructure.

4. Privacy and Data Protection

AI systems often rely on sensitive or personal data. This pillar ensures:

- Lawful data collection and usage

- Anonymization and minimization

- Compliance with data protection regulations

Privacy-aware AI is not optional; it is a regulatory and ethical necessity.
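Minimization and pseudonymization can be illustrated with a short preprocessing step that runs before data reaches a training pipeline. The field names, the `ALLOWED_FIELDS` allow-list, and the key handling below are assumptions for illustration only. Note that keyed hashing is pseudonymization, which is generally a weaker guarantee than full anonymization.

```python
import hashlib
import hmac

# Hypothetical allow-list: only fields the model actually needs are kept.
ALLOWED_FIELDS = {"age_band", "region", "account_tenure_months"}

def pseudonymize(value, secret_key):
    """Replace an identifier with a keyed hash so records can be linked but not read."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def minimize_record(record, secret_key, id_field="customer_id"):
    """Drop every field not on the allow-list and tokenize the subject identifier."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["subject_token"] = pseudonymize(str(record[id_field]), secret_key)
    return reduced

raw = {"customer_id": 42, "email": "user@example.com", "region": "EU", "age_band": "30-39"}
clean = minimize_record(raw, secret_key=b"example-key-store-this-in-a-vault")
```

In practice the key would live in a secrets manager, since anyone holding it can recompute tokens for known identifiers.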

Key AI TRiSM Actions Organizations Should Take

Establish a Dedicated AI TRiSM Task Force

AI governance cannot sit in a single department. Effective AI TRiSM requires collaboration across:

- Security and risk teams

- Data science and engineering

- Legal and compliance

- Business leadership

This ensures AI risks are addressed holistically, not in silos.

Prioritize Explainability and Interpretability

Organizations should adopt tools and practices that:

- Explain why models made specific decisions

- Highlight key influencing variables

- Support audit and investigation needs

Explainability is central to ethical AI and regulatory defense.
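One simple, model-agnostic way to highlight influential variables is permutation importance: shuffle one input column at a time and measure how much accuracy drops. The sketch below uses only the standard library and a toy `predict` function; the function names and data are hypothetical, and production teams would more likely rely on an established explainability library.

```python
import random

def permutation_importance(predict, rows, labels, n_features, seed=0):
    """Return the accuracy drop per feature when that feature's column is shuffled.

    rows is a list of lists; predict maps one row to a predicted label.
    """
    rng = random.Random(seed)

    def accuracy(data):
        return sum(predict(r) == y for r, y in zip(data, labels)) / len(labels)

    baseline = accuracy(rows)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(baseline - accuracy(shuffled))
    return importances

# Toy model that only looks at feature 0, so feature 1 should score zero.
toy_predict = lambda row: row[0] > 0.5
rows = [[i / 10, (i * 7 % 10) / 10] for i in range(10)]
labels = [toy_predict(r) for r in rows]
scores = permutation_importance(toy_predict, rows, labels, n_features=2)
```

A feature whose shuffling leaves accuracy unchanged contributes little to the decision, which is the kind of evidence an audit or investigation can build on.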

Tailor Controls to AI Use Cases

Not all AI systems carry the same risk. AI TRiSM programs should:

- Classify AI systems by impact and criticality

- Apply stricter controls to high-risk use cases

- Continuously reassess risk as models evolve
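The classification step above can be sketched as a small scoring function that maps each AI system to a tier and each tier to a set of controls. The three criteria, the tier boundaries, and the control names are illustrative assumptions, not a regulatory taxonomy.

```python
from dataclasses import dataclass

@dataclass
class AISystem:
    name: str
    affects_individuals: bool  # e.g. credit, hiring, or healthcare decisions
    fully_automated: bool      # no human review before the decision takes effect
    uses_sensitive_data: bool  # personal or otherwise regulated data

# Hypothetical mapping from tier to required controls.
CONTROLS = {
    "high": ["human review", "bias audit", "continuous monitoring", "full audit trail"],
    "medium": ["periodic review", "drift monitoring"],
    "low": ["inventory entry"],
}

def risk_tier(system):
    """Count how many risk criteria apply and map the count to a tier."""
    score = sum([system.affects_individuals, system.fully_automated, system.uses_sensitive_data])
    if score >= 2:
        return "high"
    if score == 1:
        return "medium"
    return "low"

credit_model = AISystem("credit-scoring", True, True, True)
required = CONTROLS[risk_tier(credit_model)]
```

Re-running the classification whenever a model, its data, or its usage changes is one way to implement the continuous reassessment described above.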

Ensure Data and Model Integrity

Strong AI TRiSM programs protect:

- Training datasets from poisoning

- Models from unauthorized modification

- Outputs from manipulation

Integrity controls are essential for trustworthy outcomes.
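A basic integrity control is to record a cryptographic digest of each dataset or model file at approval time and verify it before loading. The sketch below assumes the expected digest is stored somewhere trusted, such as a signed manifest; the function names are hypothetical.

```python
import hashlib
import hmac

def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large model artifacts do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_artifact(path, expected_digest):
    """Return True only if the file on disk still matches the approved digest."""
    return hmac.compare_digest(sha256_of(path), expected_digest)
```

A loader would call `verify_artifact` before deserializing a model and refuse to proceed on a mismatch. A digest check detects modification but does not show who made the change, so it is usually paired with access controls and signing.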

AI TRiSM Use Cases in Practice

Fair, Transparent, and Accountable AI

Public sector and financial institutions increasingly use AI TRiSM to:

- Validate fairness of automated decisions

- Monitor ethical compliance

- Provide audit trails for regulators
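Validating fairness usually starts with a measurable metric. One simple option is the disparate impact ratio: the lowest group approval rate divided by the highest. The sketch below is a minimal example with made-up groups and decisions; which metric is appropriate depends on the use case and jurisdiction.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions is an iterable of (group, approved) pairs; returns approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest; 1.0 means equal rates."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Illustrative data: group A is approved 80% of the time, group B 40%.
sample = ([("A", True)] * 8 + [("A", False)] * 2 +
          [("B", True)] * 4 + [("B", False)] * 6)
ratio = disparate_impact_ratio(sample)
```

A ratio below 0.8 is often flagged for review, following the "four-fifths" guideline used in US employment contexts. That threshold is a screening heuristic, not proof of bias.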

Explainable AI in Healthcare and Science

In healthcare and life sciences, AI TRiSM enables:

- Clinically explainable AI outcomes

- Validation of cause-and-effect relationships

- Increased trust among professionals and patients

The Role of Platforms Like CyberSIO TRiSMIq

As AI TRiSM matures, organizations are moving from manual governance and disconnected tools to unified AI TRiSM platforms.

CyberSIO TRiSMIq is designed to support AI TRiSM initiatives by bringing together:

- AI governance and policy enforcement

- Continuous AI risk monitoring

- Security controls aligned to enterprise SOC workflows

- Trust, compliance, and explainability management

Rather than treating AI risk as a standalone problem, platforms like TRiSMIq help organizations embed AI TRiSM into existing security, risk, and compliance operations, making AI governance measurable, scalable, and actionable.

Final Thoughts: Why AI TRiSM Is Non-Negotiable

AI TRiSM is no longer a future concept. It is a foundational requirement for organizations deploying AI responsibly.

As AI systems grow more autonomous, interconnected, and influential, the ability to trust, secure, and govern AI at scale will define which organizations succeed and which face unacceptable risk.

AI TRiSM provides the roadmap to innovate with confidence, without compromising security, ethics, or compliance.